Acquia Certification – the benefits of the Drupal developer’s ultimate test

Over the past year, several Druids (myself included) have been certified by Acquia – sponsored by Druid, of course. Acquia Certification is the professional certification program for Drupal developers. As a current benchmark for the technology, it verifies that a developer meets the standard and has extensive expertise in the field.

I interviewed our recently certified developers, Sebastian, Markus, Robert, and Simo, about their thoughts on the two certification programs – Acquia Certified Developer and Acquia Certified Front End Specialist.

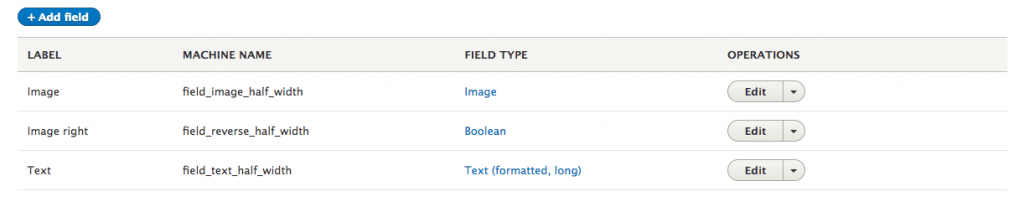

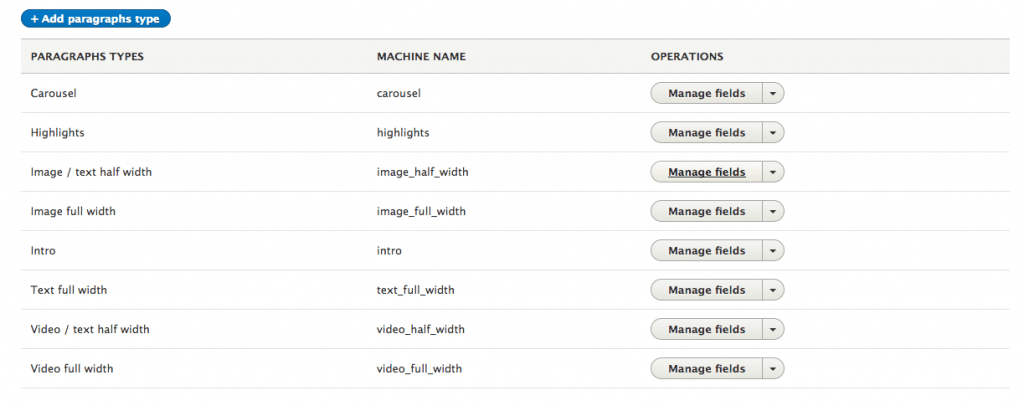

The Acquia Certified Developer certification is considered the more general exam. It validates skills in the areas of Fundamental Web Concepts, Site Building, Front End Development (theming), and Back End Development (coding). The Acquia Certified Front End Specialist exam is oriented more toward front-end technologies and the Drupal principles in this area.

Is the certification worth it?

If you ask us, the answer is yes, it’s definitely worth the effort. Everyone found the certification useful both for extending their knowledge and for demonstrating their level of expertise to customers and employers alike. Today, it’s very important to stand out in a competitive marketplace. In my view, this certification is an easy way to verify that your knowledge matches a certain standard.

“Now I’ve got a better understanding of my stronger and weaker points,” Robert said. “This exam became a good opportunity to get the broadest view of the technology and also to identify areas where I can improve.”

Simo added: “I’ve been working with Drupal for a very long time starting with Drupal 5, then 6, 7, 8, and now Drupal 9. The preparation for the exam helped me to check out the current best practices and to get away from the old ways of writing code used in earlier versions of Drupal.”

“This exam was more about the verification of what I actually know,” Markus said. “But it was a good experience.” Sebastian concluded that it was a good opportunity to demonstrate our proficiency to our customers. Indeed, some of our customers highly value this kind of confirmation of our developers’ skill level.

About the exam questions

The questions were based on real work experience and were therefore relevant to daily work, which everyone thought was great. When you work on a wide range of projects, you bump into different kinds of problems and have to come up with solutions quickly. If you’ve solved a similar problem before, you can easily find the correct answer in the exam. If not, it’s a good opportunity to learn more about the subject so you know how to approach the problem when you face it.

“There were some tricky questions at first glance, but if you’re to be qualified as an expert in the field, you should know the precise answer to it,” commented Sebastian.

Simo pointed out that coding standards are quite often neglected, and the exam questions remind you of them. Markus found it important that security-related knowledge was tested thoroughly.

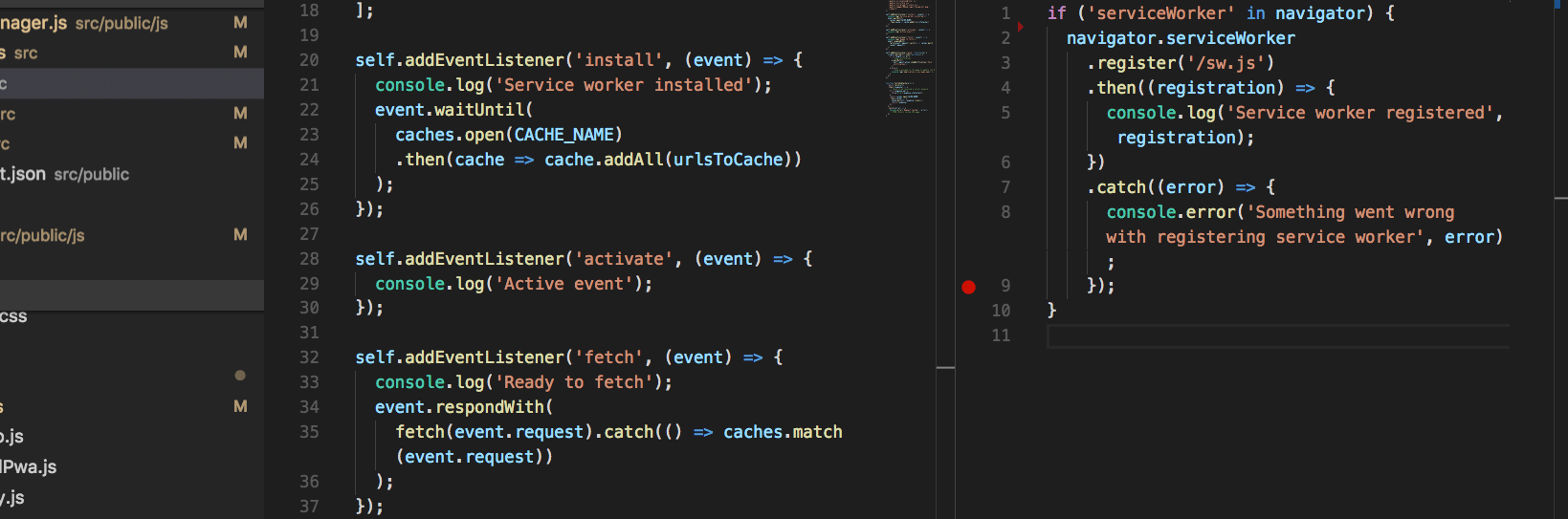

Personally, I like that an essential part of each Acquia exam is Fundamental Web Technologies, which tests your knowledge of JavaScript and the other underlying technologies.

Boost in professional development

I think the exam preparation provides you with a comprehensive overview. You start seeing the big picture, and you discover details you might have missed or have not worked with before. It’s also a source of motivation to explore more, to step beyond the theory and apply what you’ve learned in code. In that sense, I think the certification can help you become a better developer.

Both Sebastian and Robert thought that studying for the exam was probably the most beneficial part of the certification program. You can learn entirely new things. For example, I was surprised how much the Layout API and Layout Builder were improved in Drupal 9, and how much attention the Drupal community is now paying to accessibility.

“I’ve got a deeper understanding about caching systems in Drupal. Also the comprehensive study of Drupal API in general and in-depth look at backend concepts should be beneficial,” said Robert.

Markus pointed out that sometimes you’re influenced more by your peers than by any test, as you learn from actually building software, not from reading a book. But in both cases you grow as a developer by applying new knowledge in your projects.

So if you’re into measuring your Drupal expertise…

We all definitely recommend the certification. If you’re planning to get certified, the tips from the study guides provided by Acquia come in handy.

Basically, you have two main ways of measuring your expertise – via experience and real-life projects, or via certifications. According to the guys, there are often debates over whether IT certifications have any value, but in this case they agree the test is useful, especially for a CMS like Drupal, where the learning curve is quite steep. If you’re more focused on the front end, they suggest pursuing something similar for Vue or React, as this certification is naturally focused mostly on Drupal.

“While it’s good to verify your expertise by passing the exams, you should not forget about contribution to the community which is also one way to show your knowledge,” added Markus. “If you don’t contribute that much, certification is a good way.”

The certifications themselves do not prove that you’re the most talented developer in the world, but they definitely help your career, especially if you’re just starting out. They’ll also help you get noticed by big companies that pay a lot of attention to your education and certifications. Besides, it never hurts to learn something new. So, I encourage you to just go for it!

Useful links:

General information about the certification:

https://www.acquia.com/support/training-certification/acquia-certification

Study guides for Acquia Drupal certifications:

https://docs.acquia.com/certification/study-guides/

You can find our certified developers in the Acquia Certification Registry:

https://certification.acquia.com/?org=druid&exam=All